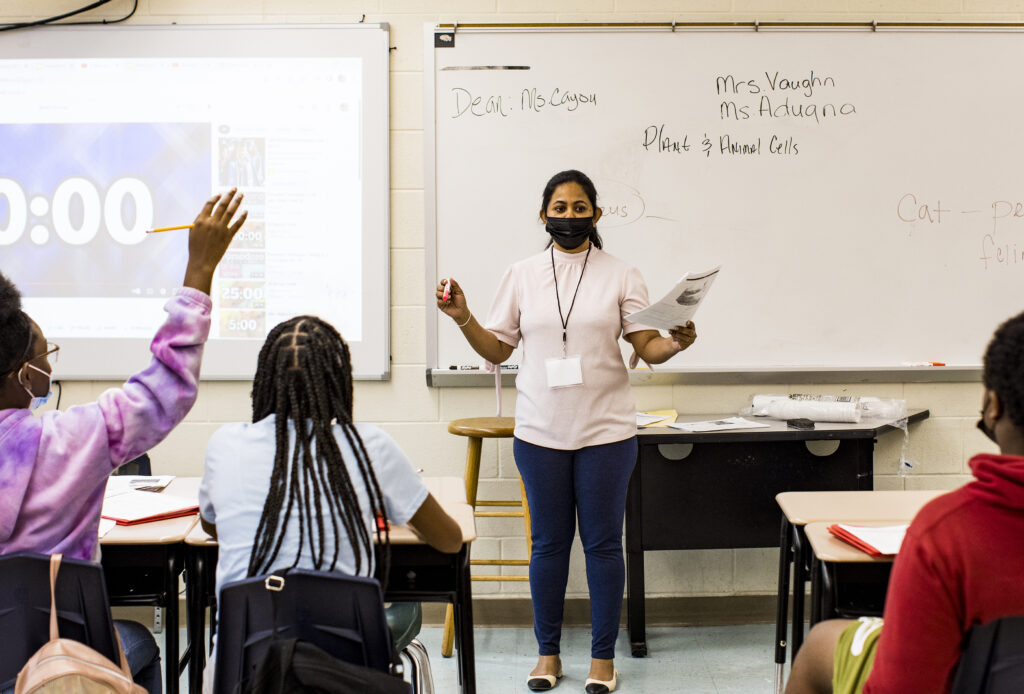

Coaching is a major part of how we train and support new teachers in the TNTP Teaching Fellows programs. Here on the blog, we’ve already written about what active coaching looks like in practice, and how a “bug in the ear” can help a new teacher learn in action.

So it’s hard to believe that just a few years ago, intensive coaching was a relatively small part of how we trained teachers. The overhauls we’ve made to our training model in recent years have all had one goal in mind: improve our support and preparation for new teachers, so Teaching Fellows perform better from day one in the classroom. Building our coaching model took time, capacity and resources, but we think it’s paying off: In our day-to-day observations, we see new teachers who look stronger in their classrooms, feel better supported and are happier.

But a gut instinct that intensive coaching works isn’t enough. If we’re going to make something a cornerstone of our model, we need to know it’s working.

So over the past school year, we collected data to find out if intensive coaching is paying off in terms of first-year teacher performance.

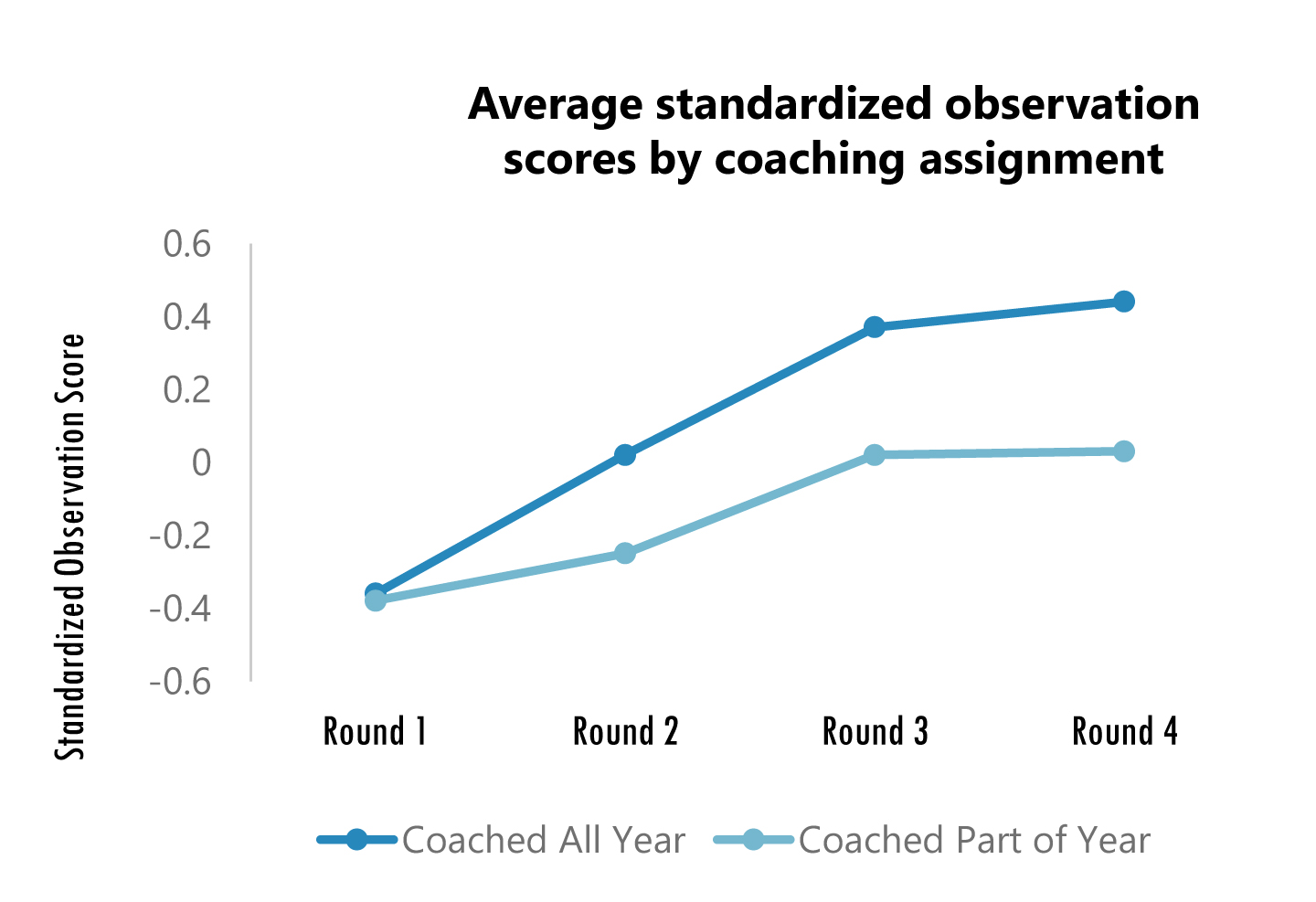

We thought about this question in two ways: First, we compared the performance of teachers who were coached for a full school year to those who were coached for just part of one. Then we explored the attitudes and performance of teachers who received virtual coaching, compared to those who received in-person coaching. Here’s what we found:

Our coaching model has a meaningful positive impact on first-year teacher performance. When we randomly assigned year-long coaches to some Fellows but not others, we found that teachers coached for the entire year earned consistently higher observation scores than those coached for only part of it, and that the gap kept widening over time.

The impact of virtual coaching is similar to the impact of in-person coaching. We monitored a subset of 10 Fellows who received virtual coaching during the school year and compared their results to those of Fellows coached in person. Although the sample is small, our results suggest that the method of coaching didn’t make much of a difference. Virtually coached Fellows actually tended to improve their observation scores more quickly and more substantially than teachers coached in person, and they reported being equally satisfied with their coaching experiences.

Connecting content-specific seminars to feedback from coaches may improve teacher performance. We noticed that the coaching model used at most of our Teaching Fellows sites produced stronger growth on the classroom management and culture parts of our teaching rubric than on new teachers’ instructional skills. To address this, we ran an experiment at one site: we improved the quality and depth of the seminars we offered on content-specific teaching techniques, then followed up with coaching focused on those same techniques. Within this small sample, Fellows who received the redesigned, content-based seminars had consistently stronger observation results for both instructional and classroom management skills. Compared to teachers in the other seminars, this small subgroup gained the equivalent of almost an extra month of improvement in the first half of the year. We’ll need to collect more data to find out whether those gains hold up over time, but we think the early data is promising.

Moving Forward. Fortunately, these results suggest we’re not just imagining things when we see our Teaching Fellows responding positively to frequent, focused coaching. They also suggest that it might not matter if a coach and a teacher are in the same room when they’re working together, opening up possibilities for making virtual coaching available on a larger scale. Our sample sizes are small so far, but we’ll continue to study our coaching models during this school year and consider how to adjust them based on the results.

At the end of the day, it isn’t good enough to try something new—it’s also important to dig into the data and find out if what we’re doing is actually helping teachers and students. We’re excited to continue to share what we learn, in the hope that our results will be helpful to others who are also trying to improve their support systems for new teachers.

This work was made possible in part by a 2010 Investing in Innovation (i3) grant from the U.S. Department of Education. i3’s support has allowed us to take TNTP Teaching Fellows programs to scale, so we can study promising approaches to developing great teachers. We are grateful to be part of the i3 program in its effort to find and foster what works in education.