Last year, I started coaching a principal at a struggling charter school whose challenges were immediately evident. Students were one or two grade levels behind in reading and math, and teacher attrition from year to year was high.

But one critical barrier to improvement wasn’t obvious, at least not right away. When I asked the principal for her take on the teachers’ talents, she assured me that 15 out of 20 were either effective or highly effective educators. In her view, the teachers were firing on all cylinders.

I was skeptical. After a few visits, during which I observed slow-moving lessons and compliant but disengaged kids, I was sure the principal was mistaken: only two or three of her teachers were effective. But until she saw what I saw, she wouldn’t be able to coach individual teachers or provide the sort of instructional leadership that strong schools need to thrive.

Rampant Inflation

I encounter this all the time in my work supporting and coaching principals, many of whom overestimate the instruction their teachers are providing. The fancy term is “rating inflation,” and in the schools I visit, it can be rampant. Studies have found that while principals can usually tell who their very best and worst teachers are, they have trouble differentiating performance among the 90 percent in the middle.

This trend has implications for evaluation. Professional judgment is indispensable, but when it is inaccurate, it can do more harm than good, leading teachers to believe they are hitting the mark when they aren’t. My colleagues who help train and evaluate our first-year Teaching Fellows have seen the same phenomenon among the principals they work with, as we described in our recent paper, Leap Year. So as we push for better judgment from school leaders, we need to invest the time to keep that judgment sharp.

I think that in many ways, rating inflation is human nature. Just like teaching, school leadership is a very personal endeavor. Principals are personally and professionally invested in their teachers’ success, and there is a lot of ego involved. When I, as a leader, tell a teacher I’ve been supporting all year that she is not effective, it is difficult not only for her to hear but also for me to admit: my support hasn’t helped enough. So could inflation be a defense mechanism of sorts? Probably.

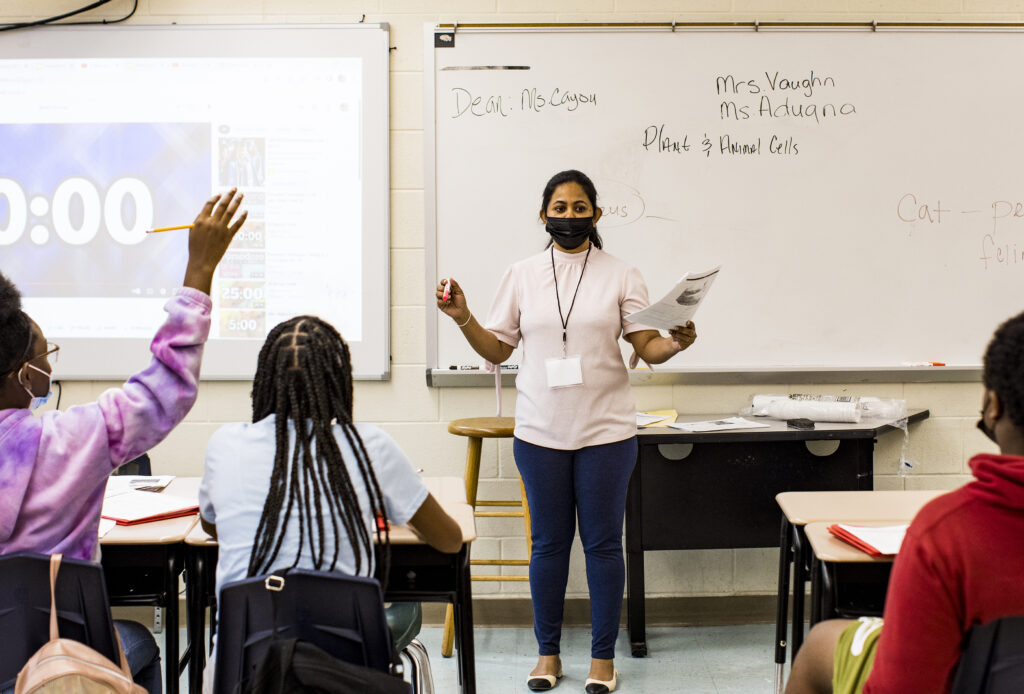

In addition, principals often spend too much time looking at what teachers do and not enough looking at what students do. Many focus almost exclusively on inputs: What is the teacher doing in front of students in the classroom? How strong a lesson planner is he? How does his math content knowledge measure up? These are all important, but they are only part of the story. The only way to see if anyone is actually learning is to look at students’ work.

Solutions

So how can we address this? Studying student outcomes is key, whether that means formally including them among multiple measures in teacher evaluation systems or reminding principals to check for learning by looking over students’ shoulders at quizzes and worksheets during routine classroom visits. Getting a second opinion on teacher performance can help, too: I’ve seen that in my coaching work, and the Measures of Effective Teaching (MET) research also found that having multiple observers increases the accuracy of teacher ratings. I also think it’s important to name this issue for principals, so it’s something they can watch for.

These strategies were effective with the principal I worked with last year, even though, like many principals, she was not especially receptive at first to the idea that her ratings could be inflated. But her school’s performance was poor, and she was willing to stretch and try new things to improve. I was blunt in identifying rating inflation as an issue, and an external consultant working with the school called it out, too. Then I gave her a detailed critique of one teacher’s performance, pointing out nuances and missed instructional opportunities to demonstrate the type of teaching that I know can really help students leap ahead.

After that, she allowed me to join her on classroom visits, where I guided her to check for student understanding. She counted how many children were responding correctly to teachers’ questions and looked over students’ shoulders at their worksheets to tally how many of their answers were right and how many were wrong. That became an explicit part of her observations, as it is for all of the principals we coach.

After we compared notes and included student work in our reviews, she substantially revised teachers’ performance ratings and landed on a more realistic bell curve. She also started to identify specific areas of strength and weakness for several teachers.

This is very much a work in progress. For that principal to turn her school around, she’ll have to learn not only how to accurately rate her teachers’ performance, but also how to use those ratings to support their development and make smart decisions about retention and recruitment. But I think the growth she experienced in just one year shows how quickly principals can themselves improve, once they have the right information at hand.